The Best DevOps (Development and Operations) Tools You Should Opt For

Ankit Patel

November 23, 2018

DevOps has been called a turning point in the IT industry. It is built on the philosophy of bringing “development” and “operations” personnel and processes together to form a stable operating environment. This increases operational speed and reduces errors, which in turn optimizes costs, improves resource management, and enhances the final product.

DevOps encourages communication, automation, and collaboration between development and operations personnel to increase both the speed and the quality of the final output.

DevOps uses tools at various stages so that automation produces faster, higher-quality output. These tools can be grouped into categories such as configuration management, application deployment, version control, monitoring and testing, and build systems.

In DevOps, the main stages are:

• Continuous integration

• Continuous delivery

• Continuous deployment

Many tools can be used interchangeably across the three stages, although some specific tools become necessary as you progress toward the delivery stage. In other words, no tool is tied exclusively to a particular stage.
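The practical difference between the three stages is where automation stops. The Python sketch below is a simplified model, not any tool’s API: every commit is built and tested (continuous integration); a verified build is always releasable but reaches production only after a manual approval (continuous delivery); or it is released automatically (continuous deployment). The `Commit` type, the `release` function, and the mode labels are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Commit:
    sha: str
    tests_pass: bool  # stands in for the result of a real build-and-test run


def integrate(commit: Commit) -> bool:
    """Continuous integration: every commit is built and tested automatically."""
    return commit.tests_pass


def release(commit: Commit, mode: str, approved: bool = False) -> str:
    """Describe what happens to a commit under each practice.

    mode is one of the hypothetical labels "integration", "delivery", "deployment".
    """
    if not integrate(commit):
        return "rejected: build or tests failed"
    if mode == "integration":
        return "merged: verified build"
    if mode == "delivery":
        # Continuous delivery: always releasable, but a person decides when to ship.
        return "deployed to production" if approved else "ready for release, awaiting approval"
    if mode == "deployment":
        # Continuous deployment: every verified build goes to production automatically.
        return "deployed to production"
    raise ValueError(f"unknown mode: {mode}")


good = Commit("a1b2c3", tests_pass=True)
print(release(good, "delivery"))    # ready for release, awaiting approval
print(release(good, "deployment"))  # deployed to production
```

The only thing separating continuous delivery from continuous deployment in this model is the `approved` flag, which mirrors the manual release decision.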

Here is a list of some of the best tools available to use in a DevOps process:

1. Source Code Repository:

A source code repository is of prime importance in DevOps. It is where code is checked in and changed when necessary. The different versions of the code written by each developer on the team are tracked here so that nobody overwrites anyone else’s work. The source code repository is the main component of continuous integration.

• Git – Git is an open source version control system and a core component of DevOps. It helps developers maintain versions of their codebase. Version control lets you version your software, share it, keep backups, and merge your work with other developers’ code, and Git makes it easy to track every change made to the code. When a piece of code is complete, the developer commits it, and the commit is stored in the local repository. The code reaches the shared Git repository only after the developer pushes it. To get changes made by others, the developer pulls them from that repository.

• TFS – Microsoft Team Foundation Server (TFS) includes a version control system called Team Foundation Version Control (TFVC) for source code management. It can also be used for reporting, project management, testing, build automation, and release management.

• Subversion – Also known as SVN, Subversion is an open source version and source control tool maintained by the Apache Software Foundation. It is widely used on Linux and other Unix variants, and unlike Git it is centralized: all code lives in a single central repository.
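The commit, push, and pull flow described for Git can be modeled in a few lines. The Python sketch below is a deliberately simplified toy, not how Git stores data: each repository is just a list of commit messages, `commit` only touches the local copy, `push` publishes local commits, and `pull` fetches commits the developer does not yet have. The `Repo` class and the helper functions are hypothetical, and real Git handles diverging histories with merges or rebases, which this sketch only reports as errors.

```python
from typing import List, Optional


class Repo:
    """A toy repository: an ordered history of commit messages."""

    def __init__(self, history: Optional[List[str]] = None) -> None:
        self.history: List[str] = list(history or [])


def commit(local: Repo, message: str) -> None:
    # Committing records the change in the developer's local repository only.
    local.history.append(message)


def push(local: Repo, remote: Repo) -> None:
    # Publish local commits, but only if the remote has nothing the developer lacks;
    # otherwise they must pull first (real Git would then require a merge or rebase).
    if local.history[: len(remote.history)] != remote.history:
        raise RuntimeError("push rejected: pull the remote changes first")
    remote.history = list(local.history)


def pull(local: Repo, remote: Repo) -> None:
    # Fetch commits the developer is missing (fast-forward only in this toy model).
    if remote.history[: len(local.history)] == local.history:
        local.history = list(remote.history)
    elif local.history[: len(remote.history)] != remote.history:
        raise RuntimeError("histories diverged: a merge is required")
    # Otherwise the local repository is already ahead and there is nothing to fetch.


remote = Repo(["initial commit"])
alice, bob = Repo(remote.history), Repo(remote.history)

commit(alice, "add login page")
print(remote.history)  # ['initial commit']  (the commit is still local)
push(alice, remote)
pull(bob, remote)
print(bob.history)     # ['initial commit', 'add login page']
```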

2. Build Server:

This is where the code is built. The code stored in the source code repository is compiled by automation tools and converted into executable code.

• Jenkins – Jenkins is a popular open source automation server used for the continuous integration stage in DevOps. It integrates with source code repositories such as Git and SVN. When a developer commits code, Jenkins detects the change in the source code repository, prepares a new build, and deploys it to the test server. Once the build is ready, the Jenkins CI pipeline runs the tests on the server and checks for errors. If the code fails a test, the responsible team members are notified.

• Artifactory – This binary repository manager by JFrog stores binary artifacts: the output of the build process, usually individual application components that can be assembled into a full product. It integrates with CI servers such as Jenkins, which can publish build outputs to it.

• SonarQube – This open source platform manages code quality, covering architecture and design, unit tests, duplications, coding rules, comments, bugs, and complexity. One of its benefits is extensibility: plugins can add support for new languages.
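The Jenkins workflow above (detect a new commit, build, test, notify on failure), together with keeping the tested build output in a binary repository as Artifactory does, can be sketched as a small polling loop. This Python sketch is illustrative only: `CIServer`, `ArtifactStore`, and the `build`, `run_tests`, and `notify` callables are hypothetical stand-ins, a real Jenkins job is configured through a Jenkinsfile or the Jenkins UI, and storing each artifact with a SHA-256 checksum is an assumption made for illustration rather than Artifactory’s API.

```python
import hashlib
from typing import Callable, Dict, List, Optional


class ArtifactStore:
    """Toy binary repository: artifacts keyed by (name, version) with a checksum."""

    def __init__(self) -> None:
        self.items: Dict[tuple, tuple] = {}

    def publish(self, name: str, version: str, data: bytes) -> str:
        checksum = hashlib.sha256(data).hexdigest()
        self.items[(name, version)] = (checksum, data)
        return checksum


class CIServer:
    """Watches the repository head and runs build -> test -> publish for each new commit."""

    def __init__(self, build: Callable[[str], bytes], run_tests: Callable[[bytes], bool],
                 notify: Callable[[str], None], store: ArtifactStore) -> None:
        self.build = build
        self.run_tests = run_tests
        self.notify = notify
        self.store = store
        self.last_seen: Optional[str] = None

    def poll(self, head: str) -> str:
        if head == self.last_seen:
            return "no change"                  # nothing new was committed
        self.last_seen = head
        binary = self.build(head)               # compile the committed code
        if not self.run_tests(binary):          # run the automated tests
            self.notify(f"build {head} failed its tests")
            return "failed"
        self.store.publish("app", head, binary)  # keep only tested artifacts
        return "published"


messages: List[str] = []
store = ArtifactStore()
ci = CIServer(build=lambda sha: f"binary for {sha}".encode(),
              run_tests=lambda binary: b"broken" not in binary,
              notify=messages.append,
              store=store)

print(ci.poll("c1"))       # published
print(ci.poll("c1"))       # no change
print(ci.poll("broken2"))  # failed
print(messages)            # ['build broken2 failed its tests']
```

Only builds that pass their tests are published, so the artifact store never holds an untested binary in this model.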

3. Configuration Management:

This covers setting up and maintaining the configuration of a server or an environment.

• Ansible – This open source automation platform helps with configuration management, task automation, IT orchestration, and application deployment. Unlike Puppet and Chef, it is agentless: it does not need an agent installed on the remote hosts. Instead, it connects over SSH, which is already installed on the systems to be managed. You can create groups of machines and configure them together, with all commands issued from a central control machine. Tasks are written in a simple YAML syntax in files called playbooks. To install a new version of a piece of software, for example, list the IP addresses of the nodes in the inventory and write a playbook that installs the new version. When the playbook is run from the control machine, the new version is installed on all the nodes.

• Puppet – This Infrastructure as Code (IaC) tool is open source configuration management software. The configuration for the managed hosts is stored on the Puppet Master. Each host runs a Puppet Agent, which connects to the Puppet Master over SSL to check whether its configuration needs to change. A tool called Facter collects details about the agent’s system and submits them to the Puppet Master, which uses this information to decide how to apply the configuration.

• Chef – Chef streamlines the task of configuring and maintaining servers and integrates with cloud-based platforms. Much like an Ansible playbook, the user writes ‘recipes’ that describe the actions to perform, such as configuration and application management. Recipes can then be grouped together into a ‘cookbook’. Chef applies these recipes to configure the resources and checks for errors.
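Ansible, Puppet, and Chef differ in how configuration reaches a host, but all three describe a desired state and change only what does not already match it. The Python sketch below models two of the delivery styles described above under simplifying assumptions: an Ansible-style control machine pushes the same tasks to every host in an inventory, while a Puppet-style agent submits its facts to a master and applies the configuration chosen for it. Hosts are plain dictionaries rather than real machines reached over SSH or SSL, and every function name, package, version, and IP address here is hypothetical, not part of any of these tools’ APIs.

```python
from typing import Dict, List

Host = Dict[str, str]  # a host's current state, e.g. {"nginx": "1.24"}


def apply(host: Host, desired: Dict[str, str]) -> List[str]:
    """Idempotently converge a host to the desired package versions; return the changes made."""
    changes = []
    for package, version in desired.items():
        if host.get(package) != version:
            host[package] = version
            changes.append(f"{package} -> {version}")
    return changes


def push_playbook(inventory: Dict[str, Host], tasks: Dict[str, str]) -> Dict[str, List[str]]:
    """Ansible-style push: the control machine applies the same tasks to every listed host."""
    return {address: apply(host, tasks) for address, host in inventory.items()}


def compile_catalog(facts: Dict[str, str]) -> Dict[str, str]:
    """Puppet-style master: choose a configuration based on the facts an agent reports."""
    catalog = {"ntp": "4.2"}
    if facts.get("role") == "web":
        catalog["nginx"] = "1.24"
    return catalog


def agent_run(host: Host, facts: Dict[str, str]) -> List[str]:
    """Puppet-style pull: the agent submits its facts, then applies the returned catalog."""
    return apply(host, compile_catalog(facts))


inventory = {"10.0.0.5": {"nginx": "1.22"}, "10.0.0.6": {}}
print(push_playbook(inventory, {"nginx": "1.24"}))
# {'10.0.0.5': ['nginx -> 1.24'], '10.0.0.6': ['nginx -> 1.24']}
print(push_playbook(inventory, {"nginx": "1.24"}))
# {'10.0.0.5': [], '10.0.0.6': []}  (already converged, nothing to change)

web_server: Host = {}
print(agent_run(web_server, {"role": "web", "os": "linux"}))
# ['ntp -> 4.2', 'nginx -> 1.24']
```

Running the same playbook twice makes no further changes, which is the idempotence that lets these tools be run repeatedly against the same servers.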

4. Virtual Infrastructure:

Virtual infrastructure exposes APIs that let the DevOps team create new machines and set them up with configuration management tools. These APIs are provided by cloud vendors that sell infrastructure and platform as a service (IaaS and PaaS). By combining automation tools with virtual infrastructure, a server can be configured automatically. Newly written code can also be tested, and whole environments built, on virtual infrastructure.
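Creating machines through a cloud API and then handing them to a configuration management tool can be sketched as follows. `FakeCloud` is a hypothetical in-memory stand-in for a vendor’s API (real SDKs from AWS or Azure have their own calls and parameters), and `configure` stands in for a configuration management run such as a playbook or recipe.

```python
import itertools
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Machine:
    machine_id: str
    size: str
    packages: Dict[str, str] = field(default_factory=dict)


class FakeCloud:
    """Hypothetical in-memory stand-in for a cloud provider's machine API."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self.machines: Dict[str, Machine] = {}

    def create_machine(self, size: str) -> Machine:
        machine = Machine(f"vm-{next(self._ids)}", size)
        self.machines[machine.machine_id] = machine
        return machine

    def delete_machine(self, machine_id: str) -> None:
        del self.machines[machine_id]


def configure(machine: Machine, desired: Dict[str, str]) -> None:
    # Stand-in for a configuration management run on the new machine.
    machine.packages.update(desired)


def build_test_environment(cloud: FakeCloud, count: int, desired: Dict[str, str]) -> List[Machine]:
    """Provision `count` machines through the API and configure each one automatically."""
    machines = [cloud.create_machine(size="small") for _ in range(count)]
    for machine in machines:
        configure(machine, desired)
    return machines


cloud = FakeCloud()
env = build_test_environment(cloud, 2, {"python": "3.12"})
print([m.machine_id for m in env])  # ['vm-1', 'vm-2']

# Tear the environment down once the tests have run.
for m in env:
    cloud.delete_machine(m.machine_id)
print(cloud.machines)  # {}
```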

• Azure DevOps – This Microsoft product includes Azure Boards, Azure Repos, Azure Pipelines, Azure Test Plans, and Azure Artifacts. Azure Boards is used to plan, track, and discuss work across teams. Azure Repos provides unlimited cloud-hosted private Git repositories. Azure Pipelines handles continuous integration and continuous delivery. Azure Test Plans is for test management, and Azure Artifacts lets you add artifacts to CI/CD pipelines.

• Amazon Web Services – This cloud platform offers AWS CodePipeline, AWS CodeBuild, AWS CodeDeploy, and AWS CodeStar. AWS CodePipeline orchestrates CI/CD processes to build, test, and deploy code. AWS CodeBuild compiles source code and runs tests, and it can process multiple builds concurrently. AWS CodeDeploy automates code deployment to enable faster releases. AWS CodeStar provides a unified user interface for deploying applications.

5. Test Automation:

In a DevOps process, test automation is not left to the last stage. Automated tests run from the build stage onward so that, by the time your code is ready to be deployed, as many errors as possible have already been caught. However, manual testing may still be needed unless your automated test suite is extensive enough that you are confident the code can be deployed without it.
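Running automated tests as part of the build, and deploying only when they pass, can be sketched with Python’s built-in `unittest` module. The `add_tax` function, its 10% default rate, and the `run_pipeline` gate are hypothetical; in a real pipeline the CI server would run the suite and trigger the deployment step.

```python
import unittest


def add_tax(price: float, rate: float = 0.10) -> float:
    """Hypothetical function under test: add sales tax and round to cents."""
    return round(price * (1 + rate), 2)


class AddTaxTests(unittest.TestCase):
    def test_default_rate(self):
        self.assertEqual(add_tax(100.0), 110.0)

    def test_zero_price(self):
        self.assertEqual(add_tax(0.0), 0.0)


def run_pipeline() -> str:
    """Run the test suite as part of the build; deploy only if every test passes."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(AddTaxTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    if not result.wasSuccessful():
        return "deployment blocked: tests failed"
    return "deploying build"  # a real pipeline would trigger the deploy step here


if __name__ == "__main__":
    print(run_pipeline())
```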

• Selenium – This open source suite of software tools automates the testing of web applications.

• Watir – An open source collection of Ruby libraries for automating web application testing.

Conclusion

There are hundreds of DevOps tools on the market, so choose the ones that best suit your company’s requirements.

You may also like

How to Choose the Right Mobile App Development Company

-

Ankit Patel

Imagine this: you’ve got a brilliant app idea that could revolutionize your business, take it to new heights, and transform your entire customer experience. But without the right team to… Read More

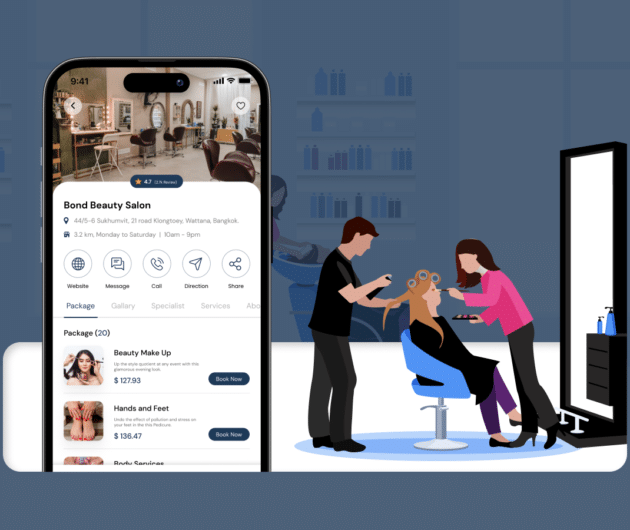

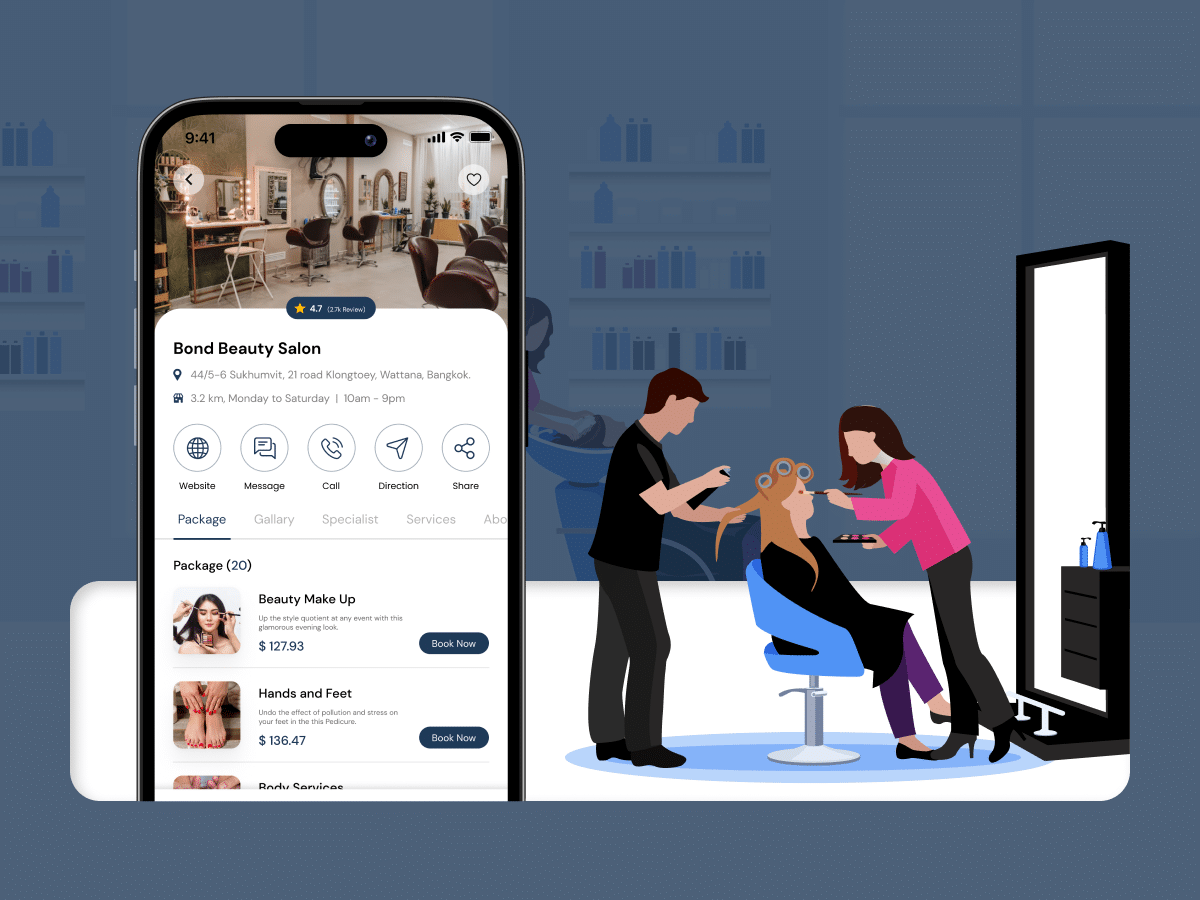

How Much Does it Cost to Build a Salon Booking App like Fresha?

-

Ankit Patel

We all have witnessed the buzz in the world of beauty & wellness, and it’s booming every day thanks to the fast-paced and stressful lifestyle. In an era where time… Read More

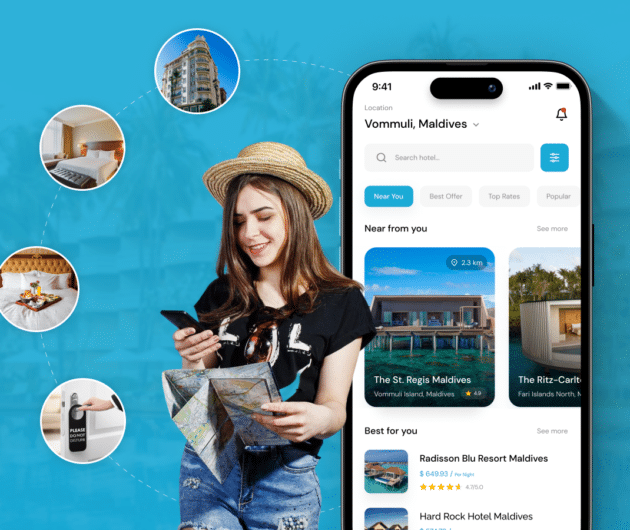

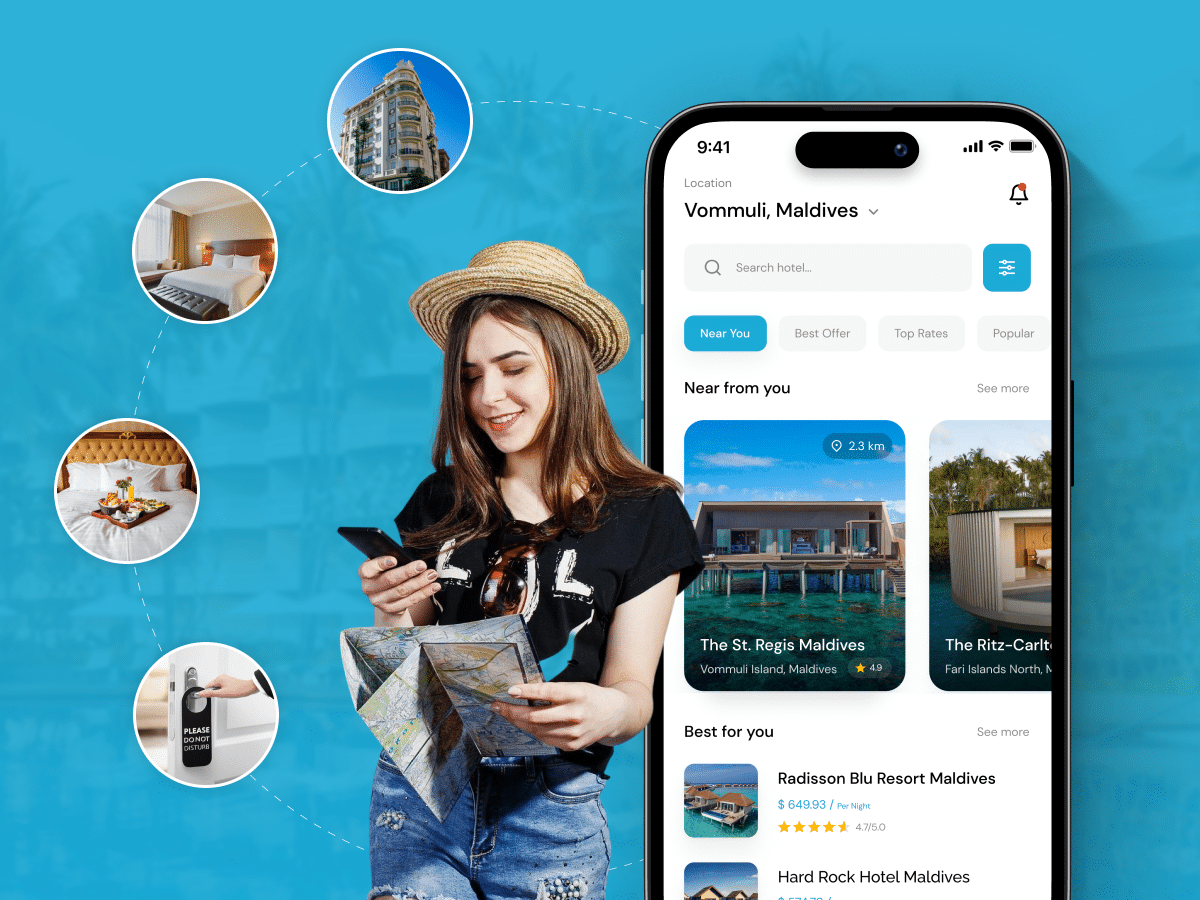

A Complete Guide to Hotel Booking App Development With Cost

-

Ankit Patel

Whether it’s a corporate business trip or a relaxing vacation with friends, finding the right hotel at the right time and a seamless hotel booking experience is not a luxury… Read More